Open WebUI: A ChatGPT-Style User Interface for Locally Deployed Large Models

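Open WebUI is an open-source project that gives local large language models a graphical interface modeled on ChatGPT, which makes it easy to debug and call local models. You can use it to connect to LLMs running on your own machine (including Ollama and OpenAI-compatible APIs), and it also supports remote servers. This post walks through a hands-on installation and lists the problems I ran into along with their fixes.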

Introduction to Open WebUI:

Open WebUI is simple to deploy with Docker and has a broad feature set: code highlighting, math formulas, web browsing, preset prompts, local RAG integration, conversation tagging, model downloads, chat history, and voice support.
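Because Open WebUI talks to Ollama and to any OpenAI-compatible API, it can help to see what such a request looks like. The sketch below is only an illustration, not Open WebUI's own code. It assumes Ollama's default local address `http://localhost:11434` with its OpenAI-compatible `/v1` path; the helper names and the model name `llama3` are placeholders chosen for this example.

```python
import json
import urllib.request

# Assumption: Ollama's default local address and its OpenAI-compatible path.
OLLAMA_BASE_URL = "http://localhost:11434/v1"


def build_chat_request(base_url, model, user_message, api_key="ollama"):
    """Build (but do not send) an OpenAI-style chat completion request."""
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": user_message}],
    }
    return urllib.request.Request(
        url=base_url.rstrip("/") + "/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Content-Type": "application/json",
            # Local servers often ignore the key, but the header keeps the
            # request shaped like a regular OpenAI API call.
            "Authorization": f"Bearer {api_key}",
        },
        method="POST",
    )


def send_chat_request(request, timeout=60):
    """Send the request and return the assistant's reply text."""
    with urllib.request.urlopen(request, timeout=timeout) as response:
        body = json.loads(response.read().decode("utf-8"))
    return body["choices"][0]["message"]["content"]
```

With a model already pulled into a running Ollama instance, `send_chat_request(build_chat_request(OLLAMA_BASE_URL, "llama3", "Hello"))` would return the reply text. Pointing `base_url` at a remote OpenAI-compatible server works the same way.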

GitHub: open-webui/open-webui: User-friendly WebUI for LLMs (Formerly Ollama WebUI)

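Features:

💻 Intuitive interface: the chat interface is heavily inspired by ChatGPT and is designed to be friendly and easy to use.

📱 Responsive design: the experience is consistent and smooth on both desktop and mobile devices.

⚡ Swift responsiveness: responses are fast and efficient.

🚀 Effortless setup: install with Docker or Kubernetes (via kubectl, kustomize, or helm) for a hassle-free start.

✅ Code syntax highlighting: code in responses is displayed with syntax highlighting, which makes it easier to read.

✒️ Full Markdown and LaTeX support: Markdown and LaTeX are fully integrated, which enriches interaction with the LLM.

📚 Local RAG integration: Retrieval Augmented Generation (RAG) support is built in, so documents can be brought directly into the chat. Load a document into the chat or add files to the document library, then reference the content with the # command. This feature is still in alpha and is being improved for stability and performance.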

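To summarize, the three key points are:

- Open WebUI is a versatile, intuitive open-source user interface. Used together with ollama, it acts as a web UI that gives users a private, ChatGPT-like experience.

- Open WebUI integrates Retrieval Augmented Generation (RAG), which lets users supply documents, websites, and videos as context for the AI to consult when answering, so that answers are more accurate.

- The accuracy of document-based question answering can be improved by tuning the Top K value and refining the RAG template prompt.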
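To make the Top K and RAG template ideas concrete, here is a minimal sketch of how retrieval with a Top K cutoff and a prompt template typically fit together. It is not Open WebUI's implementation: the template text, the function names, and the toy two-dimensional vectors are assumptions made for illustration (a real system gets its vectors from an embedding model).

```python
import math

# Hypothetical template; a real RAG template is configurable text with
# placeholders for the retrieved context and the user's question.
RAG_TEMPLATE = (
    "Use the following context to answer the question.\n"
    "Context:\n{context}\n\n"
    "Question: {question}"
)


def cosine_similarity(a, b):
    """Cosine similarity of two equal-length vectors (0.0 if either is zero)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def top_k_chunks(query_vec, chunks, k):
    """Return the texts of the k chunks most similar to the query.

    chunks is a list of (text, vector) pairs.
    """
    ranked = sorted(
        chunks,
        key=lambda chunk: cosine_similarity(query_vec, chunk[1]),
        reverse=True,
    )
    return [text for text, _ in ranked[:k]]


def build_prompt(question, context_chunks, template=RAG_TEMPLATE):
    """Fill the template with the retrieved chunks and the question."""
    return template.format(context="\n".join(context_chunks), question=question)
```

A larger `k` passes more chunks to the model (more recall, but more noise and a longer prompt), while the template controls how strictly the model is told to rely on that context. Those are the two knobs the last bullet refers to.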

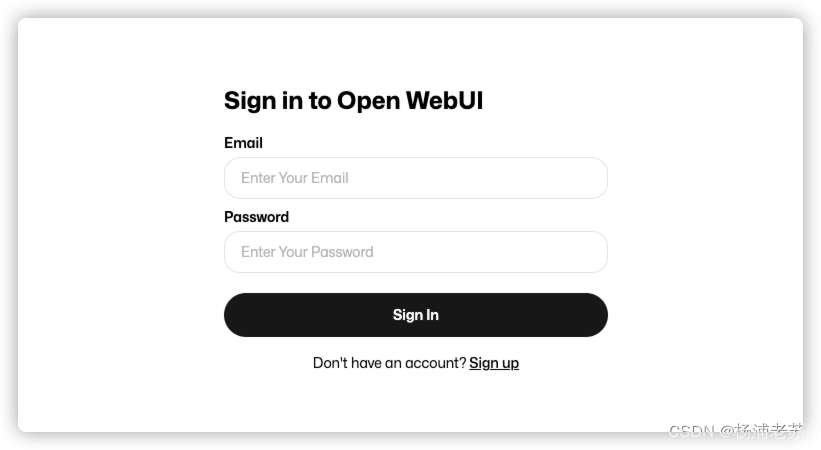

Q: How secure is Open WebUI? It asks you to register on first use, so where does the registration data go?

Open WebUI is a self-hosted web application: the frontend in your browser talks to a backend running on your own server, so the account data is stored there rather than sent to a third party.

The first registration creates the admin user. The official FAQ puts it this way:

Q: Why am I asked to sign up? Where are my data being sent to?

A: We require you to sign up to become the admin user for enhanced security. This ensures that if the Open WebUI is ever exposed to external access, your data remains secure. It's important to note that everything is kept local. We do not collect your data. When you sign up, all information stays within your server and never leaves your device. Your privacy and security are our top priorities, ensuring that your data remains under your control at all times.

Installing Open WebUI:

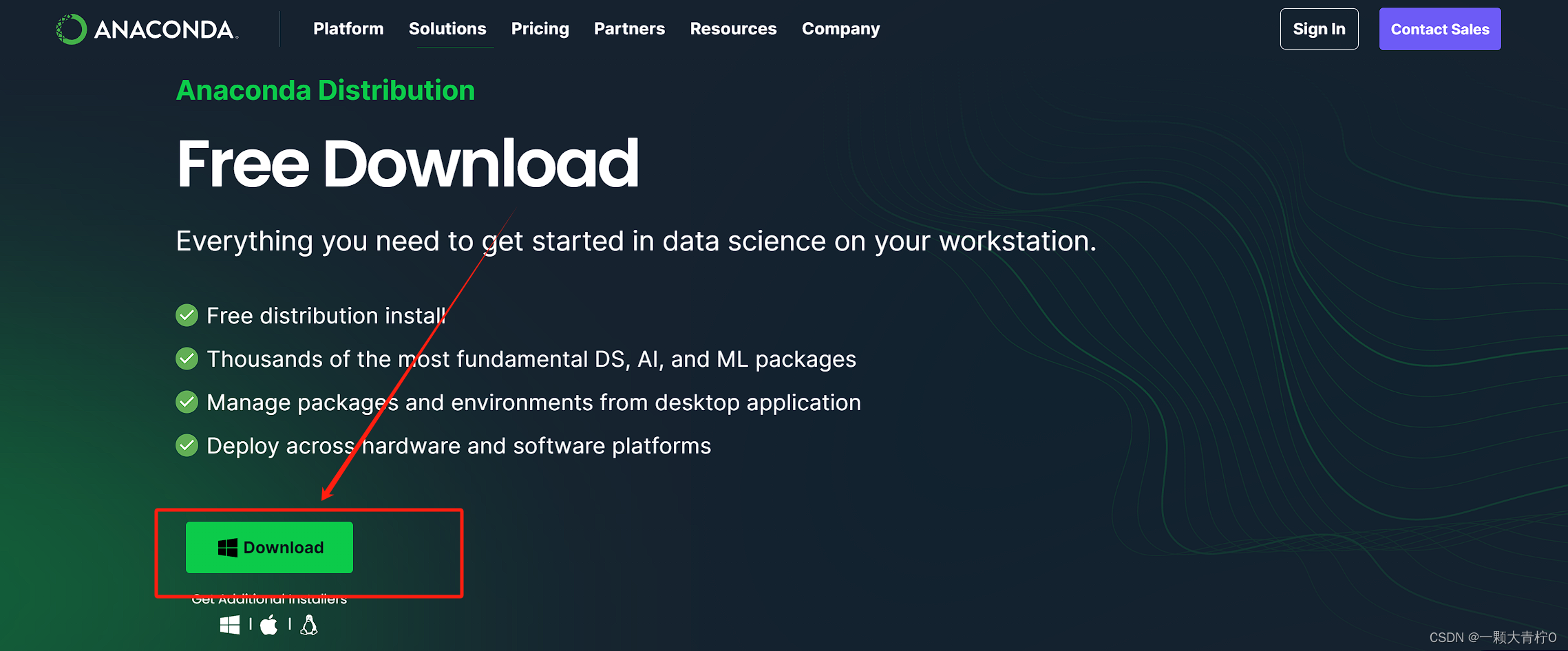

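So far I have only installed it on Linux. During installation I relied mainly on this CSDN article:

Linux installation reference: "ollama+open-webui,本地部署自己的大模型" (ollama + open-webui: deploying your own large model locally)

Its steps are detailed and cover the errors that come up during installation; most of them can be resolved by following the article step by step.

Another article is also very detailed and worth consulting if you are installing on Windows:

Windows installation reference: "本机部署大语言模型:Ollama和OpenWebUI实现各大模型的人工智能自由" (deploying large language models on your own machine with Ollama and OpenWebUI)

I am on CentOS, and I ran into the following error during installation: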

(open-webui) [root@master open-webui]# npm i

node: /lib64/libm.so.6: version `GLIBC_2.27' not found (required by node)

node: /lib64/libstdc++.so.6: version `GLIBCXX_3.4.20' not found (required by node)

node: /lib64/libstdc++.so.6: version `CXXABI_1.3.9' not found (required by node)

node: /lib64/libstdc++.so.6: version `GLIBCXX_3.4.21' not found (required by node)

node: /lib64/libc.so.6: version `GLIBC_2.28' not found (required by node)

node: /lib64/libc.so.6: version `GLIBC_2.25' not found (required by node)

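Fix: these errors mean the node binary was built against newer versions of glibc and libstdc++ than the system provides. See the CSDN post "node: /lib64/libm.so.6: version `GLIBC_2.27' not found问题解决方案" for the solution.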
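Before working through that fix, it can be useful to confirm which glibc the system actually has. The sketch below compares the installed version with the versions named in the error messages above (2.25, 2.27, 2.28). It uses Python's standard `platform.libc_ver()`; the helper names are made up for this example, and it only covers glibc, not the libstdc++ (`GLIBCXX`, `CXXABI`) symbols.

```python
import platform

# glibc versions that the node binary asked for in the errors above.
REQUIRED_GLIBC = ("2.25", "2.27", "2.28")


def parse_version(text):
    """Turn '2.17' into (2, 17) so versions compare numerically."""
    return tuple(int(part) for part in text.split("."))


def unmet_requirements(installed, required=REQUIRED_GLIBC):
    """Return the required versions that are newer than the installed one."""
    installed_version = parse_version(installed)
    return [r for r in required if parse_version(r) > installed_version]


def detect_glibc_version():
    """Return the glibc version Python was linked against, or None."""
    lib, version = platform.libc_ver()
    return version if lib == "glibc" and version else None
```

Running `unmet_requirements(detect_glibc_version())` on the affected machine lists the versions that are still missing; an empty list means glibc itself is new enough for this node build.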

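In addition, when open-webui starts it needs to reach 'https://huggingface.co' to download the sentence-transformers/all-MiniLM-L6-v2 embedding model, and it fails with a connection error if that site is unreachable.

I solved this by setting one environment variable that points to a mirror: export HF_ENDPOINT=https://hf-mirror.com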
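The effect of HF_ENDPOINT is easiest to see in the download URLs. The sketch below mimics the URL pattern visible in the startup log further down (`<endpoint>/<repo>/resolve/main/<file>`); it is an illustration with made-up helper names, not the `huggingface_hub` library's code.

```python
import os

DEFAULT_ENDPOINT = "https://huggingface.co"


def current_endpoint(environ=None):
    """Return the endpoint from HF_ENDPOINT, or the default if it is unset."""
    environ = os.environ if environ is None else environ
    return environ.get("HF_ENDPOINT", DEFAULT_ENDPOINT).rstrip("/")


def resolve_url(endpoint, repo_id, filename, revision="main"):
    """Build a file URL in the pattern seen in the startup log."""
    return f"{endpoint}/{repo_id}/resolve/{revision}/{filename}"
```

With the variable exported, `resolve_url(current_endpoint(), "sentence-transformers/all-MiniLM-L6-v2", "config.json")` points at hf-mirror.com instead of huggingface.co. The variable has to be set in the same shell before `bash start.sh` is run so that the backend process inherits it.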

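Below is a snapshot of launching open-webui after the conda virtual environment had been created and the npm install had completed, for reference only. The first run fails because huggingface.co is unreachable; the second run, after setting HF_ENDPOINT, succeeds: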

(base) [root@master backend]# conda activate open-webui

(open-webui) [root@master backend]# bash start.sh

No WEBUI_SECRET_KEY provided

Loading WEBUI_SECRET_KEY from .webui_secret_key

USER_AGENT environment variable not set, consider setting it to identify your requests.

No sentence-transformers model found with name sentence-transformers/all-MiniLM-L6-v2. Creating a new one with mean pooling.

Traceback (most recent call last):

File "/root/miniconda3/envs/open-webui/lib/python3.8/site-packages/urllib3/connection.py", line 196, in _new_conn

sock = connection.create_connection(

File "/root/miniconda3/envs/open-webui/lib/python3.8/site-packages/urllib3/util/connection.py", line 85, in create_connection

raise err

File "/root/miniconda3/envs/open-webui/lib/python3.8/site-packages/urllib3/util/connection.py", line 73, in create_connection

sock.connect(sa)

OSError: [Errno 101] Network is unreachable

The above exception was the direct cause of the following exception:

Traceback (most recent call last):

File "/root/miniconda3/envs/open-webui/lib/python3.8/site-packages/urllib3/connectionpool.py", line 789, in urlopen

response = self._make_request(

File "/root/miniconda3/envs/open-webui/lib/python3.8/site-packages/urllib3/connectionpool.py", line 490, in _make_request

raise new_e

File "/root/miniconda3/envs/open-webui/lib/python3.8/site-packages/urllib3/connectionpool.py", line 466, in _make_request

self._validate_conn(conn)

File "/root/miniconda3/envs/open-webui/lib/python3.8/site-packages/urllib3/connectionpool.py", line 1095, in _validate_conn

conn.connect()

File "/root/miniconda3/envs/open-webui/lib/python3.8/site-packages/urllib3/connection.py", line 615, in connect

self.sock = sock = self._new_conn()

File "/root/miniconda3/envs/open-webui/lib/python3.8/site-packages/urllib3/connection.py", line 211, in _new_conn

raise NewConnectionError(

urllib3.exceptions.NewConnectionError: <urllib3.connection.HTTPSConnection object at 0x7f8572048eb0>: Failed to establish a new connection: [Errno 101] Network is unreachable

The above exception was the direct cause of the following exception:

Traceback (most recent call last):

File "/root/miniconda3/envs/open-webui/lib/python3.8/site-packages/requests/adapters.py", line 667, in send

resp = conn.urlopen(

File "/root/miniconda3/envs/open-webui/lib/python3.8/site-packages/urllib3/connectionpool.py", line 843, in urlopen

retries = retries.increment(

File "/root/miniconda3/envs/open-webui/lib/python3.8/site-packages/urllib3/util/retry.py", line 519, in increment

raise MaxRetryError(_pool, url, reason) from reason # type: ignore[arg-type]

urllib3.exceptions.MaxRetryError: HTTPSConnectionPool(host='huggingface.co', port=443): Max retries exceeded with url: /sentence-transformers/all-MiniLM-L6-v2/resolve/main/config.json (Caused by NewConnectionError('<urllib3.connection.HTTPSConnection object at 0x7f8572048eb0>: Failed to establish a new connection: [Errno 101] Network is unreachable'))

During handling of the above exception, another exception occurred:

Traceback (most recent call last):

File "/root/miniconda3/envs/open-webui/lib/python3.8/site-packages/huggingface_hub/file_download.py", line 1722, in _get_metadata_or_catch_error

metadata = get_hf_file_metadata(url=url, proxies=proxies, timeout=etag_timeout, headers=headers)

File "/root/miniconda3/envs/open-webui/lib/python3.8/site-packages/huggingface_hub/utils/_validators.py", line 114, in _inner_fn

return fn(*args, **kwargs)

File "/root/miniconda3/envs/open-webui/lib/python3.8/site-packages/huggingface_hub/file_download.py", line 1645, in get_hf_file_metadata

r = _request_wrapper(

File "/root/miniconda3/envs/open-webui/lib/python3.8/site-packages/huggingface_hub/file_download.py", line 372, in _request_wrapper

response = _request_wrapper(

File "/root/miniconda3/envs/open-webui/lib/python3.8/site-packages/huggingface_hub/file_download.py", line 395, in _request_wrapper

response = get_session().request(method=method, url=url, **params)

File "/root/miniconda3/envs/open-webui/lib/python3.8/site-packages/requests/sessions.py", line 589, in request

resp = self.send(prep, **send_kwargs)

File "/root/miniconda3/envs/open-webui/lib/python3.8/site-packages/requests/sessions.py", line 703, in send

r = adapter.send(request, **kwargs)

File "/root/miniconda3/envs/open-webui/lib/python3.8/site-packages/huggingface_hub/utils/_http.py", line 66, in send

return super().send(request, *args, **kwargs)

File "/root/miniconda3/envs/open-webui/lib/python3.8/site-packages/requests/adapters.py", line 700, in send

raise ConnectionError(e, request=request)

requests.exceptions.ConnectionError: (MaxRetryError("HTTPSConnectionPool(host='huggingface.co', port=443): Max retries exceeded with url: /sentence-transformers/all-MiniLM-L6-v2/resolve/main/config.json (Caused by NewConnectionError('<urllib3.connection.HTTPSConnection object at 0x7f8572048eb0>: Failed to establish a new connection: [Errno 101] Network is unreachable'))"), '(Request ID: 430abcfa-0ffb-419d-a853-40caed43b5c8)')

The above exception was the direct cause of the following exception:

Traceback (most recent call last):

File "/root/miniconda3/envs/open-webui/lib/python3.8/site-packages/transformers/utils/hub.py", line 399, in cached_file

resolved_file = hf_hub_download(

File "/root/miniconda3/envs/open-webui/lib/python3.8/site-packages/huggingface_hub/utils/_validators.py", line 114, in _inner_fn

return fn(*args, **kwargs)

File "/root/miniconda3/envs/open-webui/lib/python3.8/site-packages/huggingface_hub/file_download.py", line 1221, in hf_hub_download

return _hf_hub_download_to_cache_dir(

File "/root/miniconda3/envs/open-webui/lib/python3.8/site-packages/huggingface_hub/file_download.py", line 1325, in _hf_hub_download_to_cache_dir

_raise_on_head_call_error(head_call_error, force_download, local_files_only)

File "/root/miniconda3/envs/open-webui/lib/python3.8/site-packages/huggingface_hub/file_download.py", line 1826, in _raise_on_head_call_error

raise LocalEntryNotFoundError(

huggingface_hub.utils._errors.LocalEntryNotFoundError: An error happened while trying to locate the file on the Hub and we cannot find the requested files in the local cache. Please check your connection and try again or make sure your Internet connection is on.

The above exception was the direct cause of the following exception:

Traceback (most recent call last):

File "/root/miniconda3/envs/open-webui/bin/uvicorn", line 8, in <module>

sys.exit(main())

File "/root/miniconda3/envs/open-webui/lib/python3.8/site-packages/click/core.py", line 1157, in __call__

return self.main(*args, **kwargs)

File "/root/miniconda3/envs/open-webui/lib/python3.8/site-packages/click/core.py", line 1078, in main

rv = self.invoke(ctx)

File "/root/miniconda3/envs/open-webui/lib/python3.8/site-packages/click/core.py", line 1434, in invoke

return ctx.invoke(self.callback, **ctx.params)

File "/root/miniconda3/envs/open-webui/lib/python3.8/site-packages/click/core.py", line 783, in invoke

return __callback(*args, **kwargs)

File "/root/miniconda3/envs/open-webui/lib/python3.8/site-packages/uvicorn/main.py", line 410, in main

run(

File "/root/miniconda3/envs/open-webui/lib/python3.8/site-packages/uvicorn/main.py", line 577, in run

server.run()

File "/root/miniconda3/envs/open-webui/lib/python3.8/site-packages/uvicorn/server.py", line 65, in run

return asyncio.run(self.serve(sockets=sockets))

File "/root/miniconda3/envs/open-webui/lib/python3.8/asyncio/runners.py", line 44, in run

return loop.run_until_complete(main)

File "uvloop/loop.pyx", line 1517, in uvloop.loop.Loop.run_until_complete

File "/root/miniconda3/envs/open-webui/lib/python3.8/site-packages/uvicorn/server.py", line 69, in serve

await self._serve(sockets)

File "/root/miniconda3/envs/open-webui/lib/python3.8/site-packages/uvicorn/server.py", line 76, in _serve

config.load()

File "/root/miniconda3/envs/open-webui/lib/python3.8/site-packages/uvicorn/config.py", line 434, in load

self.loaded_app = import_from_string(self.app)

File "/root/miniconda3/envs/open-webui/lib/python3.8/site-packages/uvicorn/importer.py", line 19, in import_from_string

module = importlib.import_module(module_str)

File "/root/miniconda3/envs/open-webui/lib/python3.8/importlib/__init__.py", line 127, in import_module

return _bootstrap._gcd_import(name[level:], package, level)

File "<frozen importlib._bootstrap>", line 1014, in _gcd_import

File "<frozen importlib._bootstrap>", line 991, in _find_and_load

File "<frozen importlib._bootstrap>", line 975, in _find_and_load_unlocked

File "<frozen importlib._bootstrap>", line 671, in _load_unlocked

File "<frozen importlib._bootstrap_external>", line 843, in exec_module

File "<frozen importlib._bootstrap>", line 219, in _call_with_frames_removed

File "/root/open-webui/backend/main.py", line 25, in <module>

from apps.rag.main import app as rag_app

File "/root/open-webui/backend/apps/rag/main.py", line 85, in <module>

embedding_functions.SentenceTransformerEmbeddingFunction(

File "/root/miniconda3/envs/open-webui/lib/python3.8/site-packages/chromadb/utils/embedding_functions.py", line 83, in __init__

self.models[model_name] = SentenceTransformer(

File "/root/miniconda3/envs/open-webui/lib/python3.8/site-packages/sentence_transformers/SentenceTransformer.py", line 299, in __init__

modules = self._load_auto_model(

File "/root/miniconda3/envs/open-webui/lib/python3.8/site-packages/sentence_transformers/SentenceTransformer.py", line 1324, in _load_auto_model

transformer_model = Transformer(

File "/root/miniconda3/envs/open-webui/lib/python3.8/site-packages/sentence_transformers/models/Transformer.py", line 53, in __init__

config = AutoConfig.from_pretrained(model_name_or_path, **config_args, cache_dir=cache_dir)

File "/root/miniconda3/envs/open-webui/lib/python3.8/site-packages/transformers/models/auto/configuration_auto.py", line 934, in from_pretrained

config_dict, unused_kwargs = PretrainedConfig.get_config_dict(pretrained_model_name_or_path, **kwargs)

File "/root/miniconda3/envs/open-webui/lib/python3.8/site-packages/transformers/configuration_utils.py", line 632, in get_config_dict

config_dict, kwargs = cls._get_config_dict(pretrained_model_name_or_path, **kwargs)

File "/root/miniconda3/envs/open-webui/lib/python3.8/site-packages/transformers/configuration_utils.py", line 689, in _get_config_dict

resolved_config_file = cached_file(

File "/root/miniconda3/envs/open-webui/lib/python3.8/site-packages/transformers/utils/hub.py", line 442, in cached_file

raise EnvironmentError(

OSError: We couldn't connect to 'https://huggingface.co' to load this file, couldn't find it in the cached files and it looks like sentence-transformers/all-MiniLM-L6-v2 is not the path to a directory containing a file named config.json.

Checkout your internet connection or see how to run the library in offline mode at 'https://huggingface.co/docs/transformers/installation#offline-mode'.

(open-webui) [root@master backend]# export HF_ENDPOINT=https://hf-mirror.com

(open-webui) [root@master backend]# bash start.sh

No WEBUI_SECRET_KEY provided

Loading WEBUI_SECRET_KEY from .webui_secret_key

USER_AGENT environment variable not set, consider setting it to identify your requests.

modules.json: 100%|██████████████████████████████████████████████████████████████████████████████████████| 349/349 [00:00<00:00, 95.8kB/s]

config_sentence_transformers.json: 100%|█████████████████████████████████████████████████████████████████| 116/116 [00:00<00:00, 25.3kB/s]

README.md: 10.7kB [00:00, 19.1MB/s]

sentence_bert_config.json: 100%|███████████████████████████████████████████████████████████████████████| 53.0/53.0 [00:00<00:00, 25.2kB/s]

config.json: 612B [00:00, 283kB/s]

model.safetensors: 100%|█████████████████████████████████████████████████████████████████████████████| 90.9M/90.9M [00:16<00:00, 5.67MB/s]

tokenizer_config.json: 100%|█████████████████████████████████████████████████████████████████████████████| 350/350 [00:00<00:00, 71.3kB/s]

vocab.txt: 210kB [00:17, 30.4kB/s]Error while downloading from https://hf-mirror.com/sentence-transformers/all-MiniLM-L6-v2/resolve/main/vocab.txt: HTTPSConnectionPool(host='hf-mirror.com', port=443): Read timed out.

Trying to resume download...

vocab.txt: 232kB [00:00, 24.2MB/s]

vocab.txt: 214kB [00:29, 7.34kB/s]

tokenizer.json: 466kB [00:03, 155kB/s]

special_tokens_map.json: 100%|███████████████████████████████████████████████████████████████████████████| 112/112 [00:00<00:00, 50.8kB/s]

1_Pooling/config.json: 100%|█████████████████████████████████████████████████████████████████████████████| 190/190 [00:00<00:00, 32.2kB/s]

INFO: Started server process [71959]

INFO: Waiting for application startup.

Intialized router with Routing strategy: simple-shuffle

Routing fallbacks: None

Routing context window fallbacks: None

Router Redis Caching=None

#------------------------------------------------------------#

# #

# 'It would help me if you could add...' #

# https://github.com/BerriAI/litellm/issues/new #

# #

#------------------------------------------------------------#

Thank you for using LiteLLM! - Krrish & Ishaan

Give Feedback / Get Help: https://github.com/BerriAI/litellm/issues/new

Intialized router with Routing strategy: simple-shuffle

Routing fallbacks: None

Routing context window fallbacks: None

Router Redis Caching=None

INFO: Application startup complete.

INFO: Uvicorn running on http://0.0.0.0:8080 (Press CTRL+C to quit)
